In our second installment of the Kubernetes Observability tutorial series, we’ll dive deep into monitoring all facets of your applications operating within a Kubernetes environment. This includes a comprehensive examination of the following:

- Ingesting and analysing logs

- Collecting performance and health metrics

- Monitoring application performance with Elastic APM

Our toolkit for this will be Elastic Observability, which enables an in-depth analysis of container metrics in Kibana using the Metrics app and readily available dashboards.


Collection of metrics from Kubernetes

Much like collecting Kubernetes logs, collecting Kubernetes metrics poses certain challenges:

- Kubernetes deployments may span multiple hosts, each requiring monitoring for metrics like CPU usage, memory consumption, disk utilization, and network I/O.

- Kubernetes containers, which operate like mini virtual machines, generate their own unique set of metrics.

- Applications running within Kubernetes, including both application servers and databases, often employ distinct reporting methods.

Managing Kubernetes observability can become intricate, especially in organizations that use a variety of technologies for handling metrics. An effective solution is Elastic Observability, which brings metrics together with log and APM data for comprehensive analysis and enhanced visibility.

Collection of K8s metrics with Metricbeat

Metricbeat is similar to Filebeat in that it is the only component we need to collect various metrics from the pods running in our Kubernetes cluster, as well as Kubernetes' own cluster metrics. Its modules give a quick and easy way to pick up metrics from various sources and ship them to Elasticsearch as ECS-compatible events, which can then be correlated with logs, uptime, and APM data. Metricbeat is deployed on Kubernetes in the following two ways:

- A single pod collects Kubernetes metrics. This pod uses kube-state-metrics to collect cluster-level metrics.

- A DaemonSet deploys Metricbeat as a single instance per Kubernetes host to collect metrics from the pods deployed on that host. Metricbeat communicates with the kubelet APIs to find the components running on that host, and uses methods such as auto-detection to query those components for technology-specific metrics.

Before you get started: The following tutorial relies on having a Kubernetes environment set up. We’ve created a supplementary blog that walks you through the process of setting up a single-node Minikube environment with a demo application for running the rest of the activities.

Collection of host, Docker, and Kubernetes metrics

Each DaemonSet instance collects host, Docker, and Kubernetes metrics, as defined in the YAML config $HOME/k8s-o11y-workshop/metricbeat/metricbeat.yml:

System (host) metric configuration

    system.yml: |-
      - module: system
        period: 10s
        metricsets:
          - cpu
          - load
          - memory
          - network
          - process
          - process_summary
          - core
          - diskio
          # - socket
        processes: ['.*']
        process.include_top_n:
          by_cpu: 5      # include top 5 processes by CPU
          by_memory: 5   # include top 5 processes by memory
      - module: system
        period: 1m
        metricsets:
          - filesystem
          - fsstat
        processors:
        - drop_event.when.regexp:
            system.filesystem.mount_point: '^/(sys|cgroup|proc|dev|etc|host|lib)($|/)'
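To get a feel for which filesystem events the `drop_event` condition above discards, the pattern can be tried against a few mount points. The sketch below is only an illustration: the mount points are made up, and it assumes that Python's `re` module treats this simple pattern the same way Metricbeat's own regular expression engine does.

```python
import re

# Same pattern as the drop_event.when.regexp condition in the config above.
SKIPPED_MOUNTS = re.compile(r"^/(sys|cgroup|proc|dev|etc|host|lib)($|/)")


def is_dropped(mount_point: str) -> bool:
    """Return True if an event for this mount point would match the drop pattern."""
    return SKIPPED_MOUNTS.search(mount_point) is not None


if __name__ == "__main__":
    # Hypothetical mount points, chosen only to exercise the pattern.
    for mount in ["/", "/sys/fs/cgroup", "/proc", "/etc/hosts", "/var/lib/docker", "/lib64"]:
        print(f"{mount:20} dropped={is_dropped(mount)}")
```

Because the pattern is anchored at the start and requires either the end of the string or a `/` after the directory name, a path such as `/var/lib/docker` or `/lib64` is kept, while `/proc` and anything below `/sys` is dropped.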

Metric Configuration of Docker

    docker.yml: |-
      - module: docker
        metricsets:
          - "container"
          - "cpu"
          - "diskio"
          - "event"
          - "healthcheck"
          - "info"
          # - "image"
          - "memory"
          - "network"
        hosts: ["unix:///var/run/docker.sock"]
        period: 10s
        enabled: true

Metrics Configuration of Kubernetes

This includes collecting metrics from the pods deployed onto the host by communicating with the kubelet API:

    kubernetes.yml: |-
      - module: kubernetes
        metricsets:
          - node
          - system
          - pod
          - container
          - volume
        period: 10s
        host: ${NODE_NAME}
        hosts: ["localhost:10255"]
      - module: kubernetes
        metricsets:
          - proxy
        period: 10s
        host: ${NODE_NAME}
        hosts: ["localhost:10249"]

Check out the Metricbeat documentation for more information about Metricbeat modules and the data behind the metricsets.

Collection of Kubernetes state metrics and events

A single Metricbeat instance is deployed to collect Kubernetes state metrics. It integrates with the kube-state-metrics API to monitor state changes of the objects managed by Kubernetes. This section of the config defines it:

$HOME/k8s-o11y-workshop/metricbeat/metricbeat.yml:

    kubernetes.yml: |-
      - module: kubernetes
        metricsets:
          - state_node
          - state_deployment
          - state_replicaset
          - state_pod
          - state_container
          # Uncomment this to get k8s events:
          - event
        period: 10s
        host: ${NODE_NAME}
        hosts: ["kube-state-metrics:8080"]

Metricbeat autodiscovery using pod annotations

The Metricbeat DaemonSet deployment can autodetect the components running in the pods and apply the matching Metricbeat modules to collect technology-specific metrics. Autodiscovery can be enabled through pod annotations, which indicate the module to apply along with other module-specific configuration. This section of the Metricbeat config enables Kubernetes-based autodiscovery.

$HOME/k8s-o11y-workshop/metricbeat/metricbeat.yml:

    metricbeat.autodiscover:
      providers:
        - type: kubernetes
          host: ${NODE_NAME}
          hints.enabled: true

Two components in this tutorial use hints-based autodiscovery:

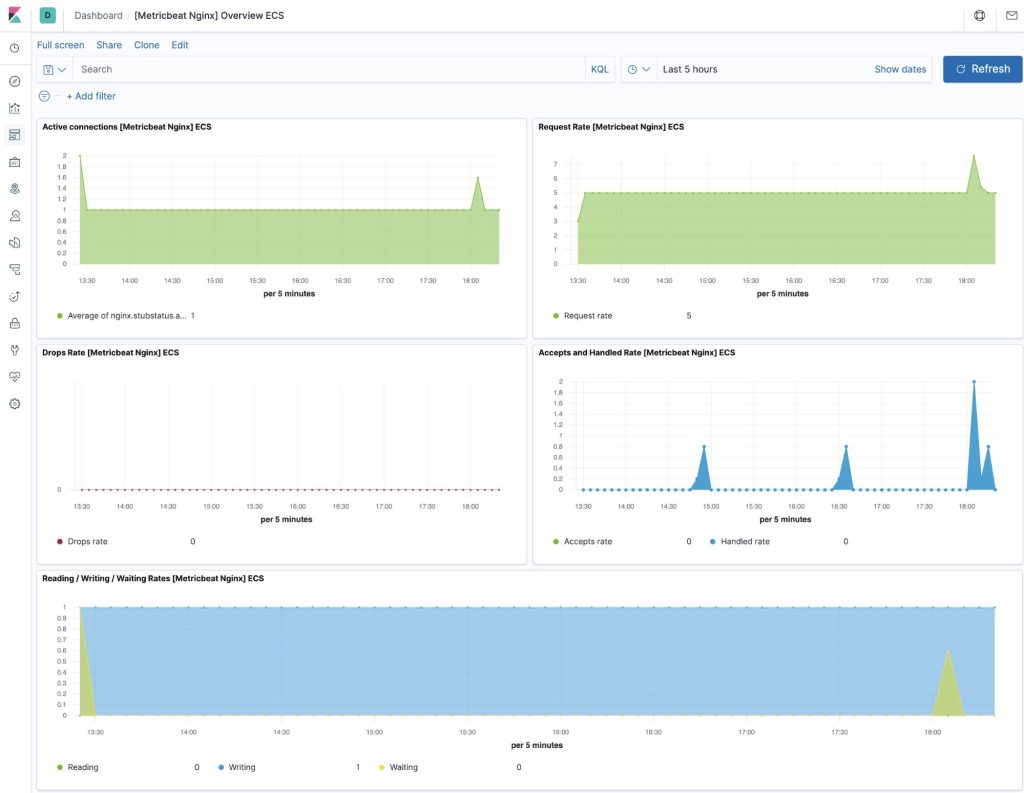

- NGINX definition, $HOME/k8s-o11y-workshop/nginx/nginx.yml:

      template:
        metadata:
          labels:
            app: nginx
          annotations:
            co.elastic.metrics/module: nginx
            co.elastic.metrics/hosts: '${data.host}:${data.port}'

- MySQL definition, $HOME/k8s-o11y-workshop/mysql/mysql.yml:

      template:
        metadata:
          labels:
            app: mysql
          annotations:
            co.elastic.metrics/module: mysql
            co.elastic.metrics/hosts: 'root:petclinic@tcp(${data.host}:${data.port})/'

See the Metricbeat documentation for more information on hints-based autodiscovery.
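The idea behind the annotations can be sketched in a few lines of Python: every `co.elastic.metrics/*` annotation becomes a module setting, and the `${data.host}` and `${data.port}` placeholders are filled in with the values discovered for the pod. This is a simplified illustration, not Metricbeat's actual implementation; the function name `resolve_hints` and the address used below are hypothetical.

```python
HINT_PREFIX = "co.elastic.metrics/"


def resolve_hints(annotations: dict, host: str, port: int) -> dict:
    """Turn co.elastic.metrics/* annotations into a module-config-like dict.

    Illustrative only: this mimics the idea of hints, not Metricbeat's real code.
    """
    config = {}
    for key, value in annotations.items():
        if not key.startswith(HINT_PREFIX):
            continue  # ignore annotations that are not metrics hints
        setting = key[len(HINT_PREFIX):]
        # Substitute the placeholders with the values discovered for the pod.
        value = value.replace("${data.host}", host).replace("${data.port}", str(port))
        config[setting] = value
    return config


if __name__ == "__main__":
    nginx_annotations = {
        "co.elastic.metrics/module": "nginx",
        "co.elastic.metrics/hosts": "${data.host}:${data.port}",
    }
    # 10.0.0.12 and 80 are made-up values standing in for a discovered pod.
    print(resolve_hints(nginx_annotations, "10.0.0.12", 80))
```

The same substitution explains the MySQL annotation above, where the placeholders sit inside a longer connection string rather than forming the whole value.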

Collection of application metrics, Prometheus-style

The Spring Boot petclinic application exposes a range of application-specific metrics in a form that Prometheus can scrape. You can navigate to the application HTTP endpoint at http://<public-ip>:30080/metrics/prometheus to see how the metrics are reported. We will use Metricbeat to collect these metrics, staying with Elastic components for all our observability needs.

Here is an example of what our application reports:

The following hints in the petclinic YAML deployment config tell Metricbeat to collect these metrics using the Prometheus module.

$HOME/k8s-o11y-workshop/petclinic/petclinic.yml:

    template:
      metadata:
        labels:
          app: petclinic
        annotations:
          co.elastic.metrics/module: prometheus
          co.elastic.metrics/hosts: '${data.host}:${data.port}'
          co.elastic.metrics/metrics_path: '/metrics/prometheus'
          co.elastic.metrics/period: 1m

In general, Metricbeat can augment or replace the Prometheus server. If a Prometheus server is already deployed and in use, Metricbeat can export the metrics from that server through the Prometheus Federation API. This provides visibility across multiple Prometheus servers, Kubernetes namespaces, and clusters, and enables correlation of Prometheus metrics with logs, APM, and uptime events. If a simplified monitoring architecture is preferred, Metricbeat can collect the Prometheus metrics and send them straight into Elasticsearch.
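For readers unfamiliar with what a Prometheus-style endpoint returns, the sketch below parses a few lines of the Prometheus text exposition format into a dictionary. The metric names in `SAMPLE` are invented for illustration and are not taken from the petclinic application, and the parser makes simplifying assumptions (no timestamps, no spaces inside label values) that a real scraper such as Metricbeat's Prometheus module does not need.

```python
def parse_prometheus_text(text: str) -> dict:
    """Parse simple Prometheus exposition lines into {series: value}.

    Assumes no timestamps and no spaces inside label values.
    """
    samples = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        series, value = line.rsplit(" ", 1)
        samples[series] = float(value)
    return samples


# Hypothetical payload; the metric names are made up for this example.
SAMPLE = """\
# HELP demo_requests_total Hypothetical request counter.
# TYPE demo_requests_total counter
demo_requests_total{method="GET"} 42
demo_requests_total{method="POST"} 7
demo_heap_used_bytes 1.5e+07
"""

if __name__ == "__main__":
    for series, value in parse_prometheus_text(SAMPLE).items():
        print(series, value)
```

Each non-comment line is a series name (with optional labels in braces) followed by a numeric value, which is the shape of data that ends up as fields on the events shipped to Elasticsearch.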

Enrichment of Metadata

All the events collected by Metricbeat are enriched by the following processors.

$HOME/k8s-o11y-workshop/metricbeat/metricbeat.yml:

    processors:
      - add_cloud_metadata:
      - add_host_metadata:
      - add_kubernetes_metadata:
      - add_docker_metadata:

This metadata makes it possible to correlate metrics with hosts, Kubernetes pods, Docker containers, and cloud-provider infrastructure, as well as with other observability data such as application performance monitoring data and logs. By connecting these dots, you can gain a comprehensive understanding of your system’s performance and health.
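What shared metadata buys us can be shown with a small sketch: once metric and log events carry the same `kubernetes.pod.name` value, they can be grouped and viewed side by side. The events below are hypothetical and heavily trimmed, and the `source`, `cpu_pct`, and `message` keys are placeholders chosen for this example rather than real field names; in practice Kibana performs this kind of correlation through queries against Elasticsearch.

```python
from collections import defaultdict


def group_by_pod(events: list) -> dict:
    """Group events from different sources by their kubernetes.pod.name value."""
    grouped = defaultdict(list)
    for event in events:
        pod = event.get("kubernetes", {}).get("pod", {}).get("name")
        if pod is not None:
            grouped[pod].append(event)
    return dict(grouped)


if __name__ == "__main__":
    # Hypothetical, heavily trimmed events; real documents carry many more fields.
    events = [
        {"source": "metric", "kubernetes": {"pod": {"name": "petclinic-abc"}}, "cpu_pct": 0.42},
        {"source": "log", "kubernetes": {"pod": {"name": "petclinic-abc"}}, "message": "request served"},
        {"source": "metric", "kubernetes": {"pod": {"name": "nginx-xyz"}}, "cpu_pct": 0.05},
    ]
    for pod, related in group_by_pod(events).items():
        print(pod, [e["source"] for e in related])
```

Events without Kubernetes metadata are simply left out of the grouping, which is why enriching every event at collection time matters.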

Metrics in Kibana

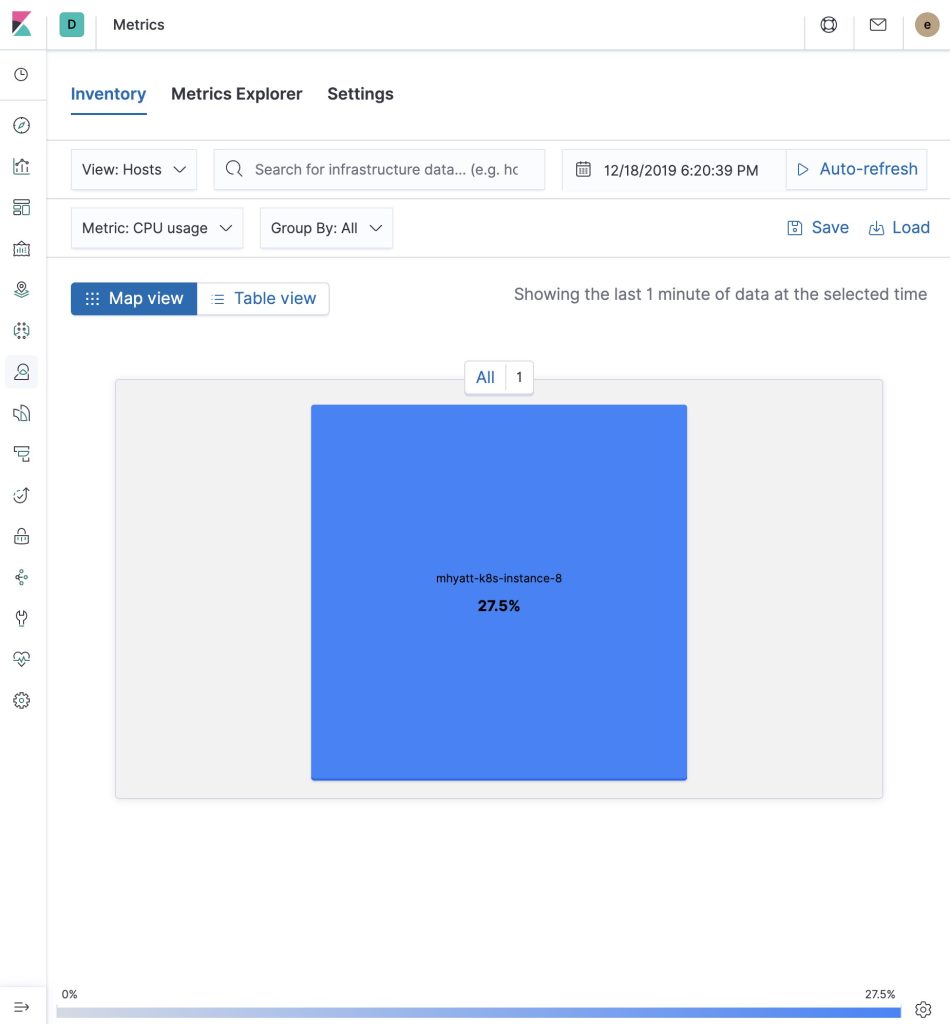

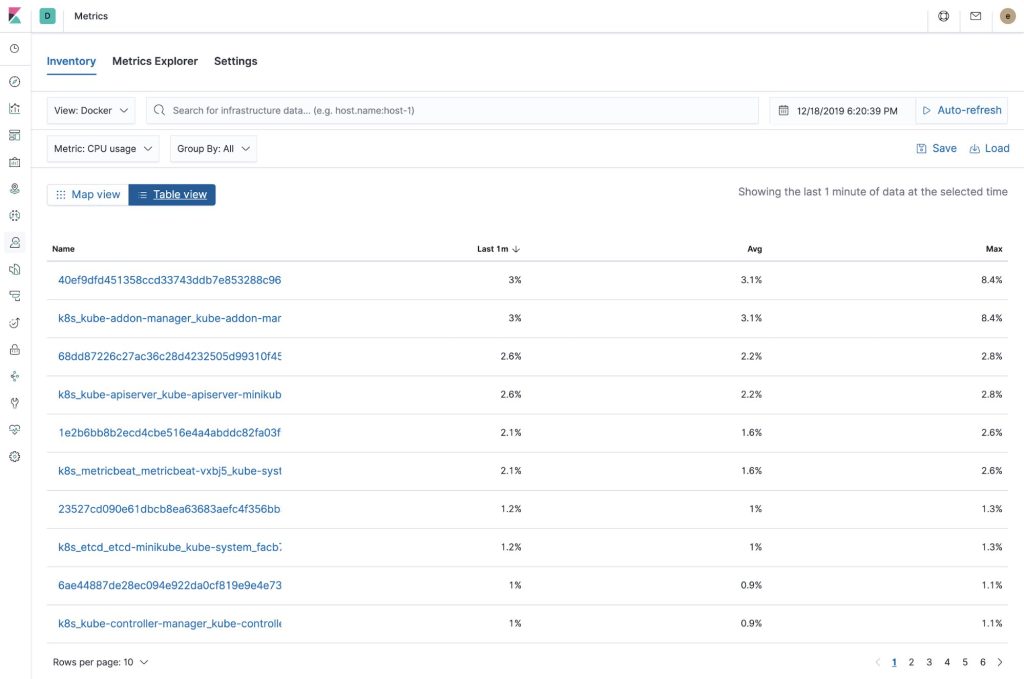

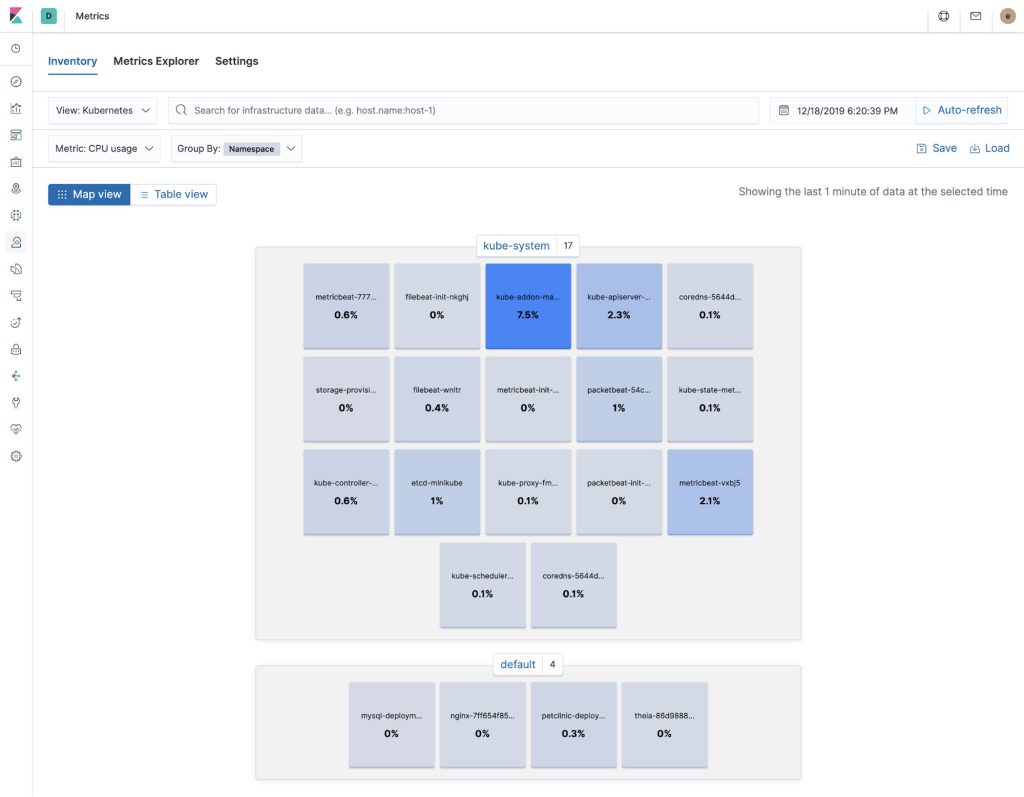

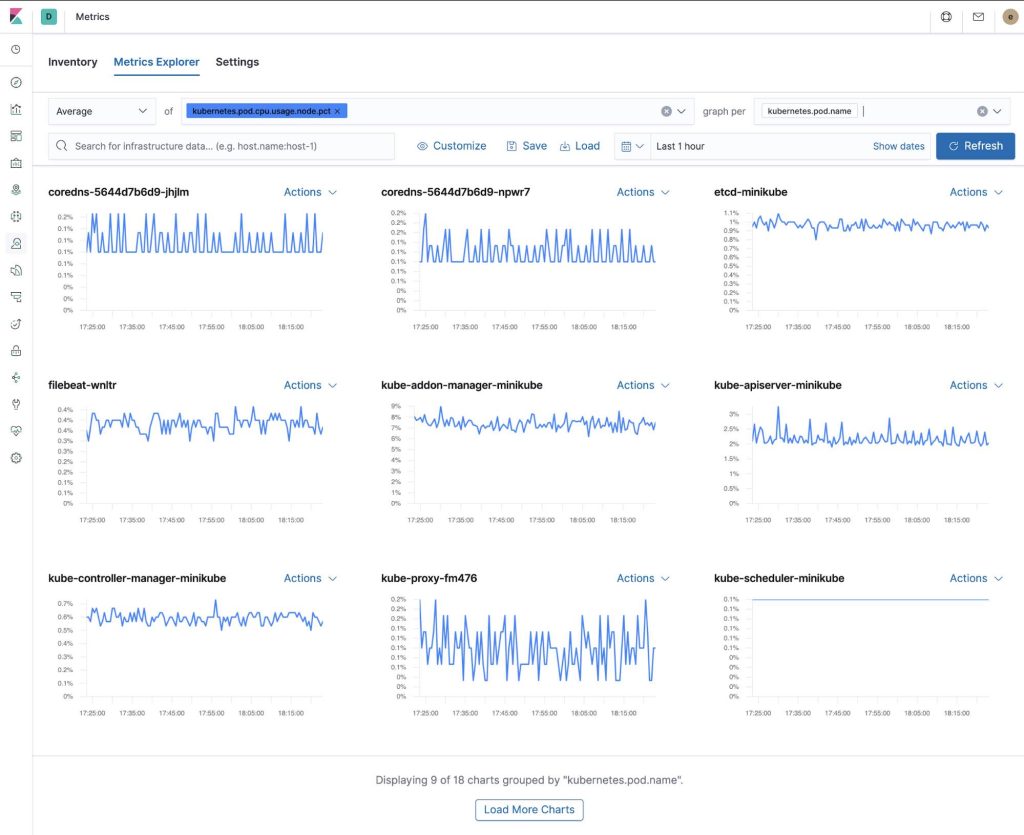

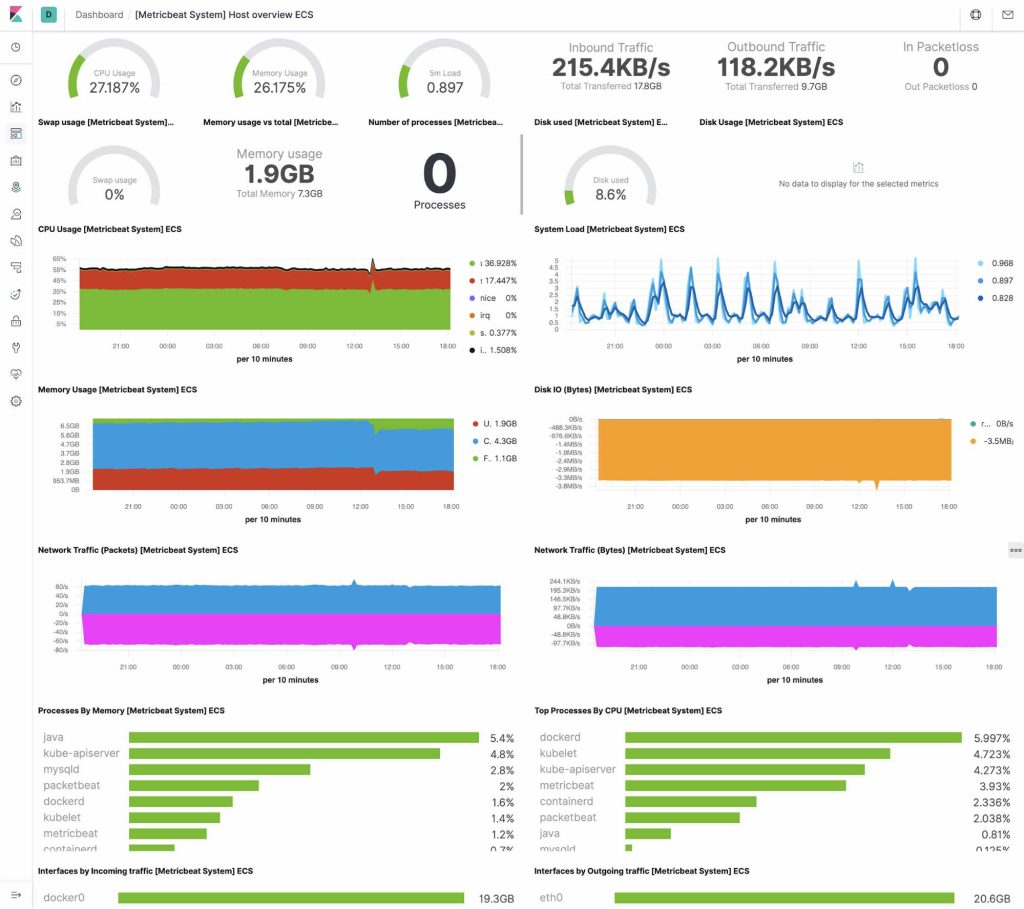

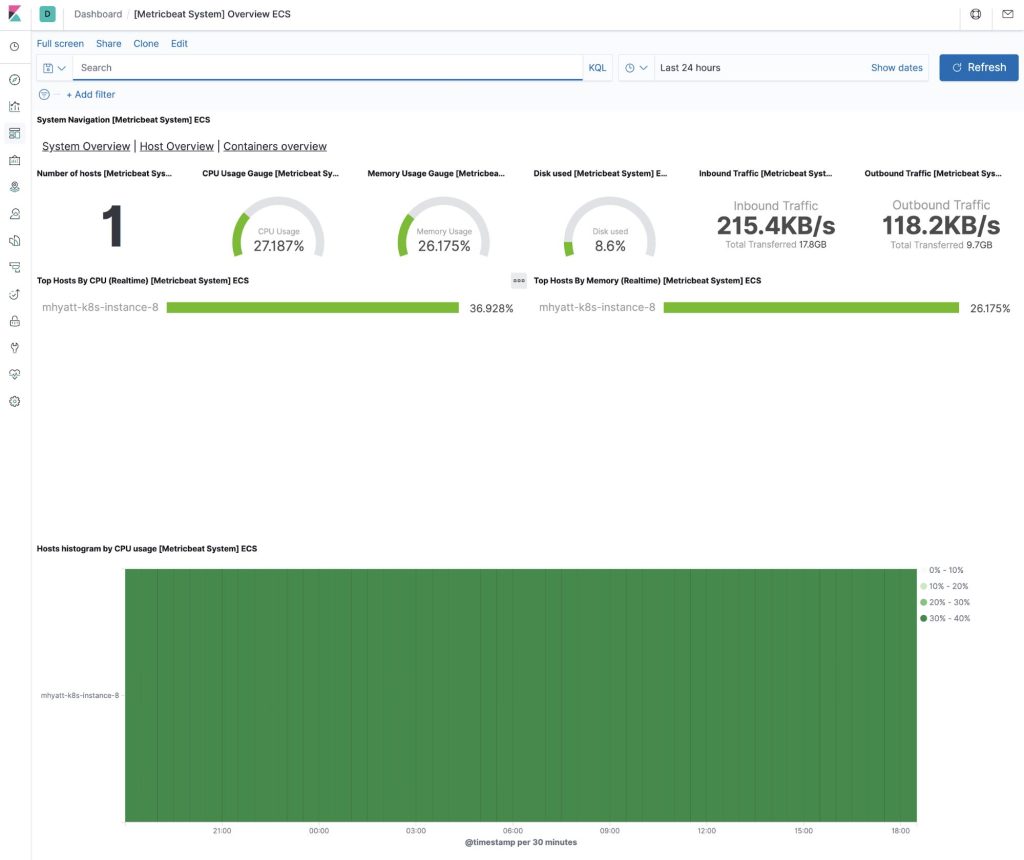

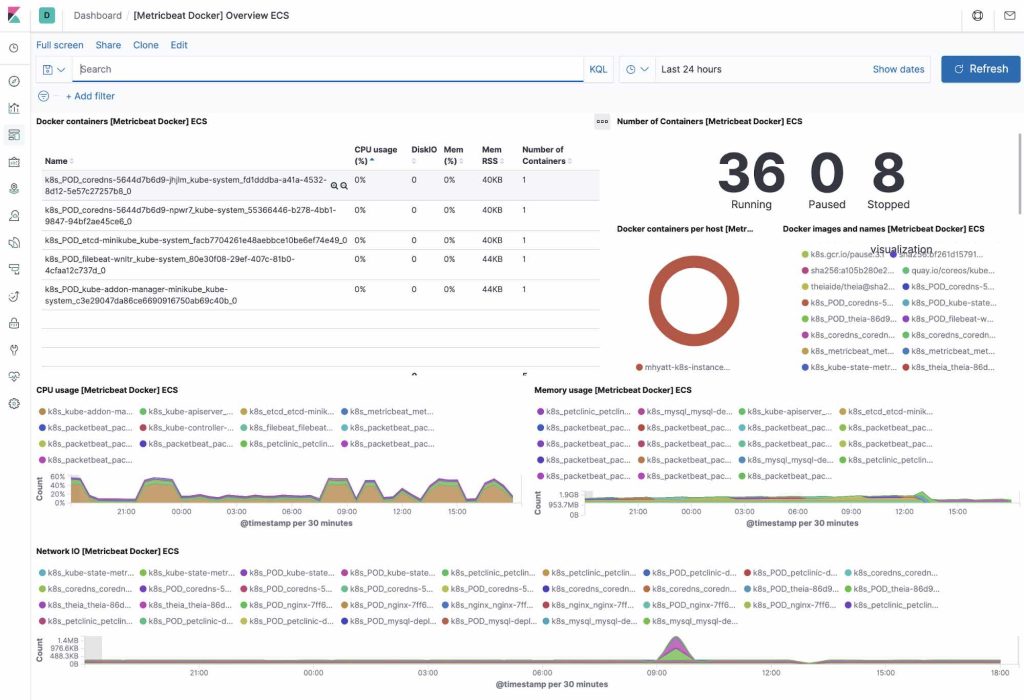

With this Metricbeat configuration, the following views become available in the Metrics app. The Kibana search bar helps with filtering and zooming in on whatever we are looking for. This tutorial uses only one host, see below:

Host infrastructure metrics

Docker infra and metrics (table view)

Kubernetes infra and metrics

Metrics explorer

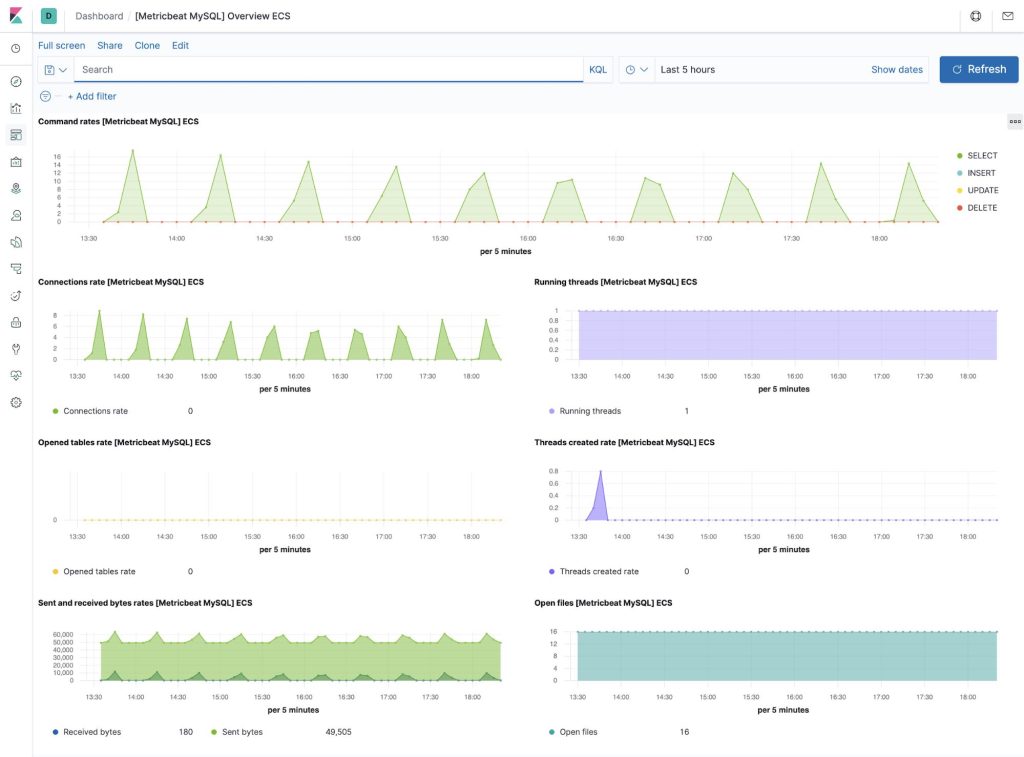

Out-of-the-box Kibana dashboards

Metricbeat ships with many pre-built Kibana dashboards that can easily be added to your cluster with a single command. We can use these dashboards as they are, or as a starting point for custom dashboards tailored to our needs. See the dashboards below:

Host

System

Docker

NGINX

MySQL

Conclusion

In this article, we saw how to collect application and Kubernetes metrics with Metricbeat. You can start monitoring your systems and infrastructure today. Sign up for a free trial of Elasticsearch Service on Elastic Cloud, or download the Elastic Stack and host it yourself.

Once your system is up and running, it’s essential to ensure its health and performance through Kubernetes observability. Monitor host availability using uptime monitoring and track application performance on your hosts with Elastic APM. This comprehensive approach creates an observable system seamlessly integrated with your new metrics cluster. If you encounter any challenges or have inquiries, don’t hesitate to reach out in the comments section—we’re here to assist you with Kubernetes observability and more.